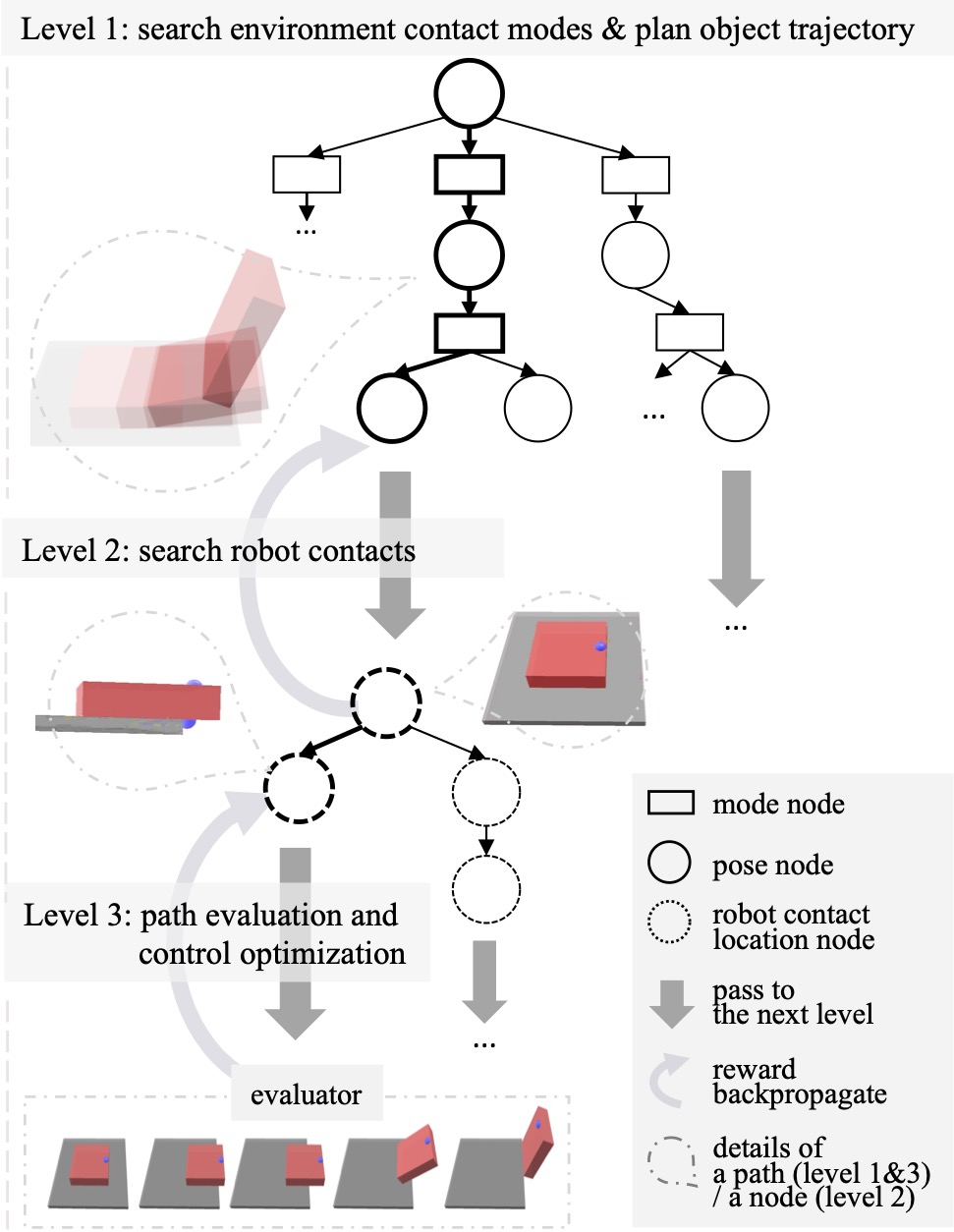

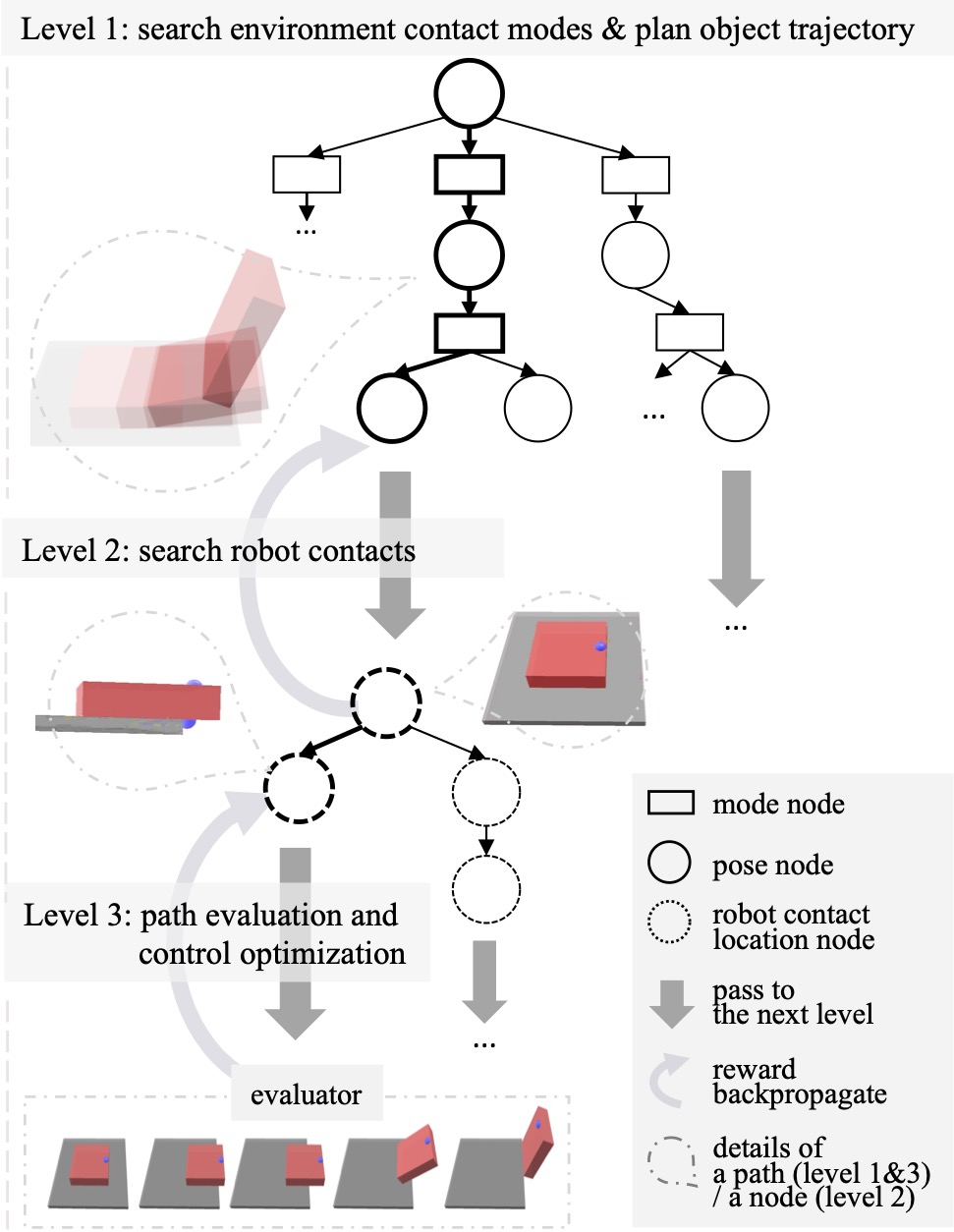

HiDex Method Overview

An overview of our framework, illustrated with the example of picking up a card. In this example, the robot pulls the card to the edge of the table and then grasps it.

The following processes run iteratively.

Level 1 plans object trajectories, interleaving searches over discrete contact modes (▭ nodes) and continuous object poses (○ nodes). Level 1 is based on Monte Carlo tree search (MCTS) and uses a rapidly-exploring random tree (RRT) as the MCTS rollout to improve exploration of the object configuration space.
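To make the idea of an RRT rollout concrete, the following is a minimal sketch and not the HiDex implementation. It assumes a toy 2D unit square in place of the object pose space, and a hypothetical `is_valid` predicate in place of the feasibility checks a contact mode would impose. The function name `rrt_rollout`, the goal-bias probability, and the step size are all illustrative choices.

```python
import math
import random

def rrt_rollout(start, goal, is_valid, step=0.1, max_iters=2000,
                goal_tol=0.1, goal_bias=0.1, seed=0):
    """Grow an RRT from start toward goal; return a path (list of points) or None.

    Toy stand-in: points are 2D tuples in the unit square, not object poses.
    """
    rng = random.Random(seed)
    nodes = [start]
    parent = {0: None}  # index of each node's parent in `nodes`
    for _ in range(max_iters):
        # With probability goal_bias sample the goal, else a uniform random point.
        target = goal if rng.random() < goal_bias else (rng.random(), rng.random())
        # Find the tree node nearest to the sampled target.
        i_near = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], target))
        near = nodes[i_near]
        d = math.dist(near, target)
        if d == 0.0:
            continue
        # Steer from the nearest node toward the target by at most `step`.
        scale = min(step, d) / d
        new = (near[0] + scale * (target[0] - near[0]),
               near[1] + scale * (target[1] - near[1]))
        if not is_valid(new):
            continue  # hypothetical feasibility check rejected this extension
        nodes.append(new)
        parent[len(nodes) - 1] = i_near
        if math.dist(new, goal) <= goal_tol:
            # Walk parent links back to the root to recover the path.
            path, i = [], len(nodes) - 1
            while i is not None:
                path.append(nodes[i])
                i = parent[i]
            return path[::-1]
    return None
```

In an MCTS setting, a path returned by such a rollout would supply candidate continuous nodes for the tree, while a `None` result would count as a failed rollout.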

An object trajectory is passed to Level 2 (⇩) to plan robot contact sequences on the object surface (◌ nodes). Level 2 is also based on MCTS. Our representation allows planning over robot contact transitions. Compared to explicitly planning robot contacts at every timestep, planning transitions greatly improves search efficiency.
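The efficiency argument can be illustrated with a small counting sketch. It makes several simplifying assumptions that are not taken from the method itself: a single finger, a discrete set of `num_contacts` candidate contact points, and a cap `max_transitions` on the number of relocations. The function names are hypothetical.

```python
from math import comb

def expand_transitions(initial_contact, transitions, horizon):
    """Expand a sparse list of (timestep, new_contact) relocations
    into an explicit per-timestep contact sequence."""
    changes = dict(transitions)
    seq, current = [], initial_contact
    for t in range(horizon):
        current = changes.get(t, current)  # relocate only where a transition is listed
        seq.append(current)
    return seq

def count_per_timestep(num_contacts, horizon):
    """Number of sequences when a contact is chosen independently at every timestep."""
    return num_contacts ** horizon

def count_with_transitions(num_contacts, horizon, max_transitions):
    """Number of sequences with at most max_transitions relocations.

    For j relocations: choose which of the (horizon - 1) step boundaries
    relocate, the initial contact, and a different contact at each relocation.
    """
    return sum(
        comb(horizon - 1, j) * num_contacts * (num_contacts - 1) ** j
        for j in range(max_transitions + 1)
    )
```

Under these assumptions, with 10 candidate contacts and 20 timesteps, `count_per_timestep(10, 20)` is 10^20, whereas `count_with_transitions(10, 20, 2)` is 140230. When `max_transitions` equals `horizon - 1`, the two counts coincide, so the saving comes entirely from searching over few relocations rather than over every timestep.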

The full trajectory of object motions and robot contacts is passed to Level 3 (⇩) for evaluation and control optimization.

After evaluation, Level 3 passes the reward back to the upper levels (↻). The reward is updated for every node in the path (bold nodes).
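The reward update along the path can be sketched as a standard MCTS backpropagation step. This is an illustration under assumptions: the running-mean value update is the common MCTS convention and is not stated here as the exact rule used, and the `Node` class and `backpropagate` function are hypothetical names.

```python
class Node:
    """Minimal search-tree node: visit count and mean reward."""
    def __init__(self, name, parent=None):
        self.name = name
        self.parent = parent
        self.visits = 0
        self.value = 0.0  # running mean of rewards seen through this node

def backpropagate(leaf, reward):
    """Update every node on the path from leaf up to the root."""
    node = leaf
    while node is not None:
        node.visits += 1
        # Incremental mean: assumed update rule, common in MCTS.
        node.value += (reward - node.value) / node.visits
        node = node.parent

# Usage: a three-node path, e.g. contact mode -> object pose -> robot contact.
root = Node("mode")
pose = Node("pose", parent=root)
contact = Node("contact", parent=pose)
backpropagate(contact, 1.0)  # reward returned by the evaluation level
```

Here the parent links simply chain the nodes of one path together; how the tree is linked across Level 1 and Level 2 in practice is not specified in this overview.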